with support from the Arts Faculty Conference Support Scheme.

Rayson Huang Theatre, HKU

1 MAR 2024 | FRI | 4:00PM-6:30PM

2 MAR 2024 | SAT | 9:30AM-6:30PM

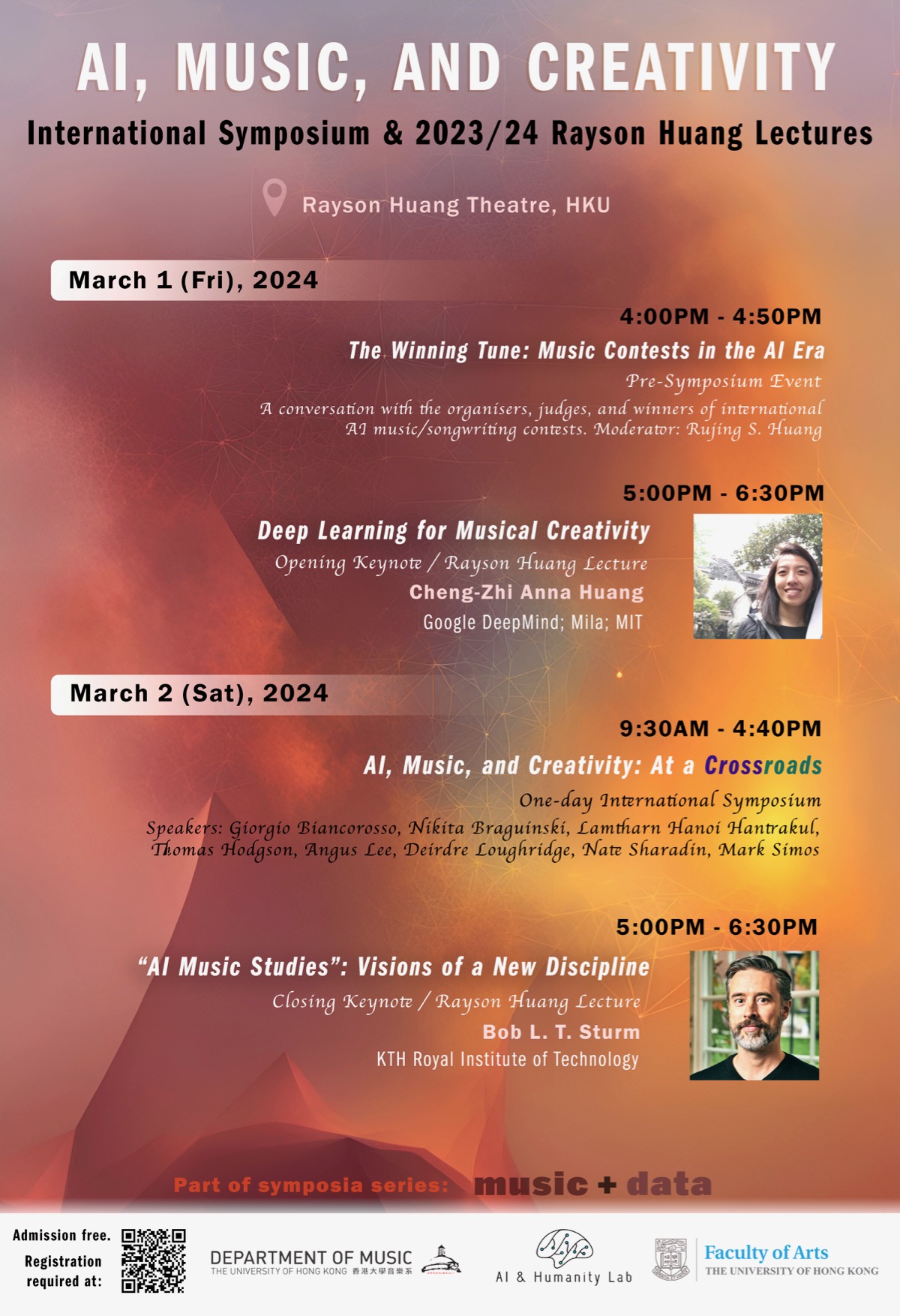

AI, Music, and Creativity: At a Crossroads is an international symposium jointly hosted by the HKU Department of Music and the AI & Humanity Lab at the Department of Philosophy. This event is a direct response to the intensifying debate over AI’s wide-ranging impact on the arts and the cultural and creative industries. By bringing together leading scholars and practitioners from industry and academia and from across the disciplinary spectrum, the symposium aims to promote balanced exchange between technological-algorithmic and cultural-critical perspectives on the pressing issues surrounding AI’s disruption and/or transformation of music and the creative fields at large. The opening and closing keynote addresses will be delivered by Prof. Cheng-Zhi Anna Huang (Google DeepMind; Mila; MIT) and Prof. Bob L. T. Sturm (KTH Royal Institute of Technology), this year’s Rayson Huang Lecturers. The latest updates on a forthcoming peer-reviewed, interdisciplinary journal that brings music studies (broadly conceived) into dialogue with data science will also be announced at the event.

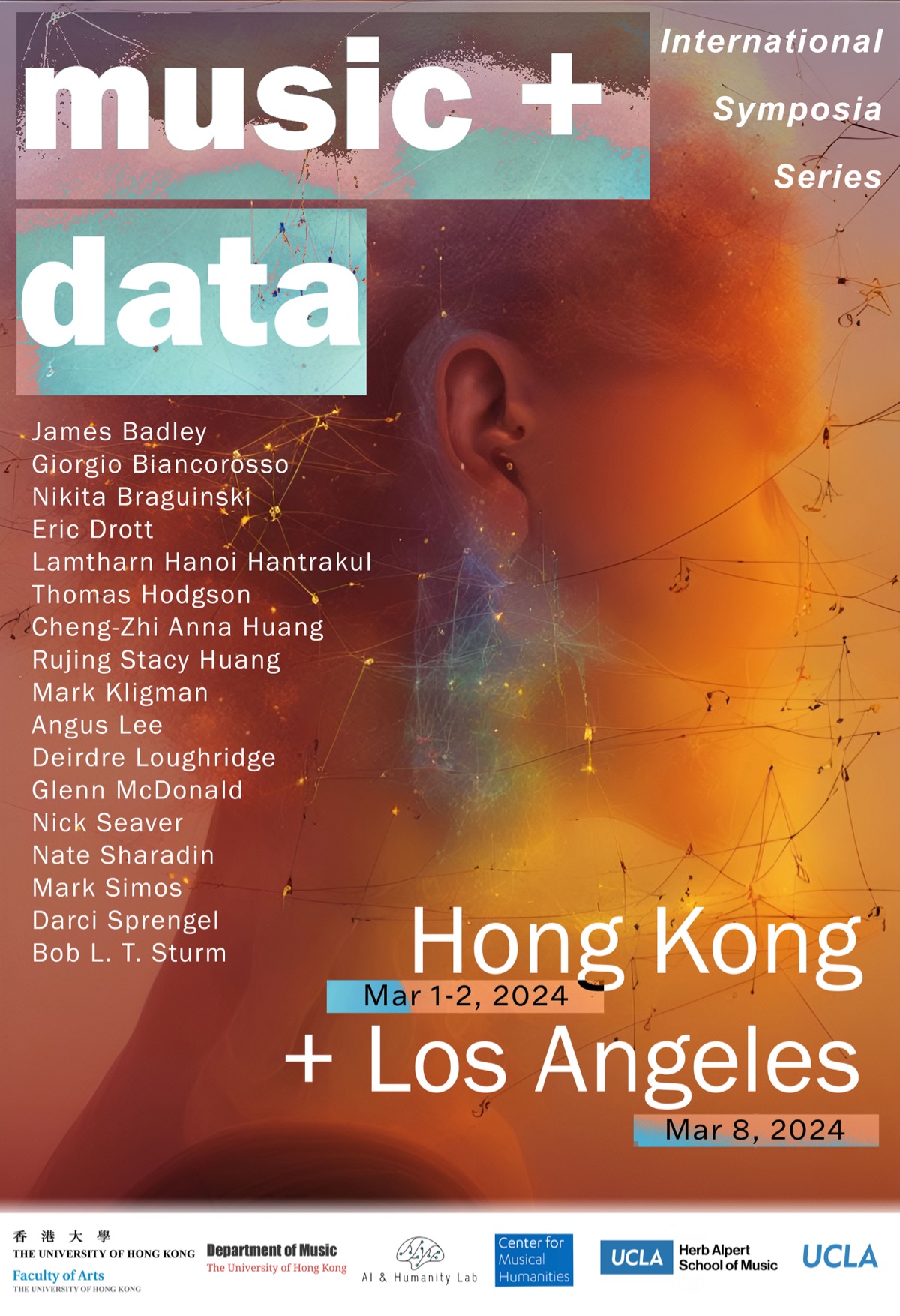

This symposium is programmed as part of Music + Data, an international, dual-sited symposia series featuring two linked events, with the first taking place at HKU (March 1-2, 2024) and the second at UCLA (March 8, 2024). Admission free. Registration is required.

Register here.

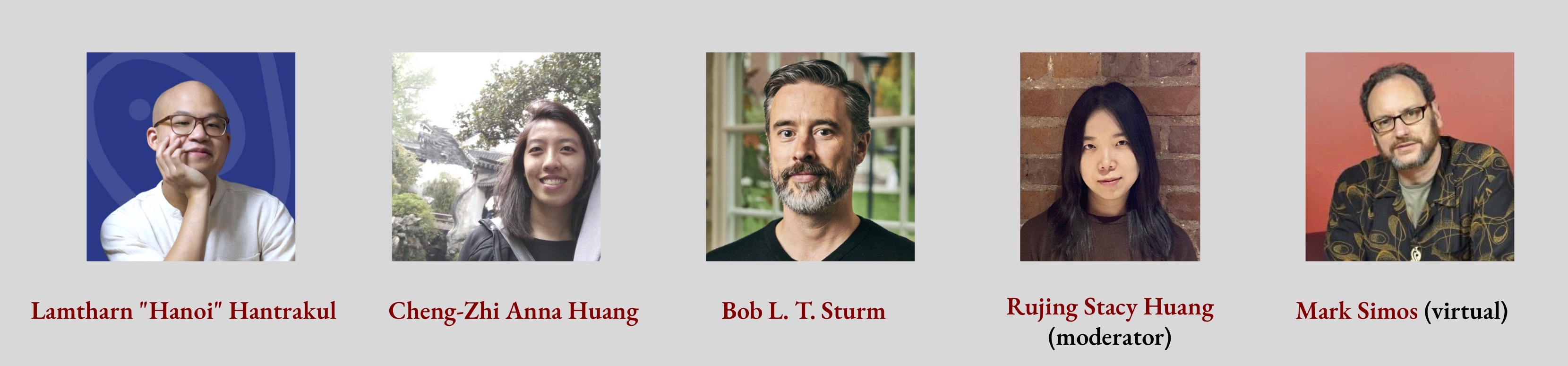

A conversation with the organisers, judges, and winners of international AI music/songwriting contests.

Moderator: Rujing Stacy Huang (The University of Hong Kong)

1 MAR 2024 | FRI | 4:00PM-4:50PM

2 MAR 2024 | SAT | 9:30AM-4:40PM

Welcome Remarks

9:30AM-9:50AM

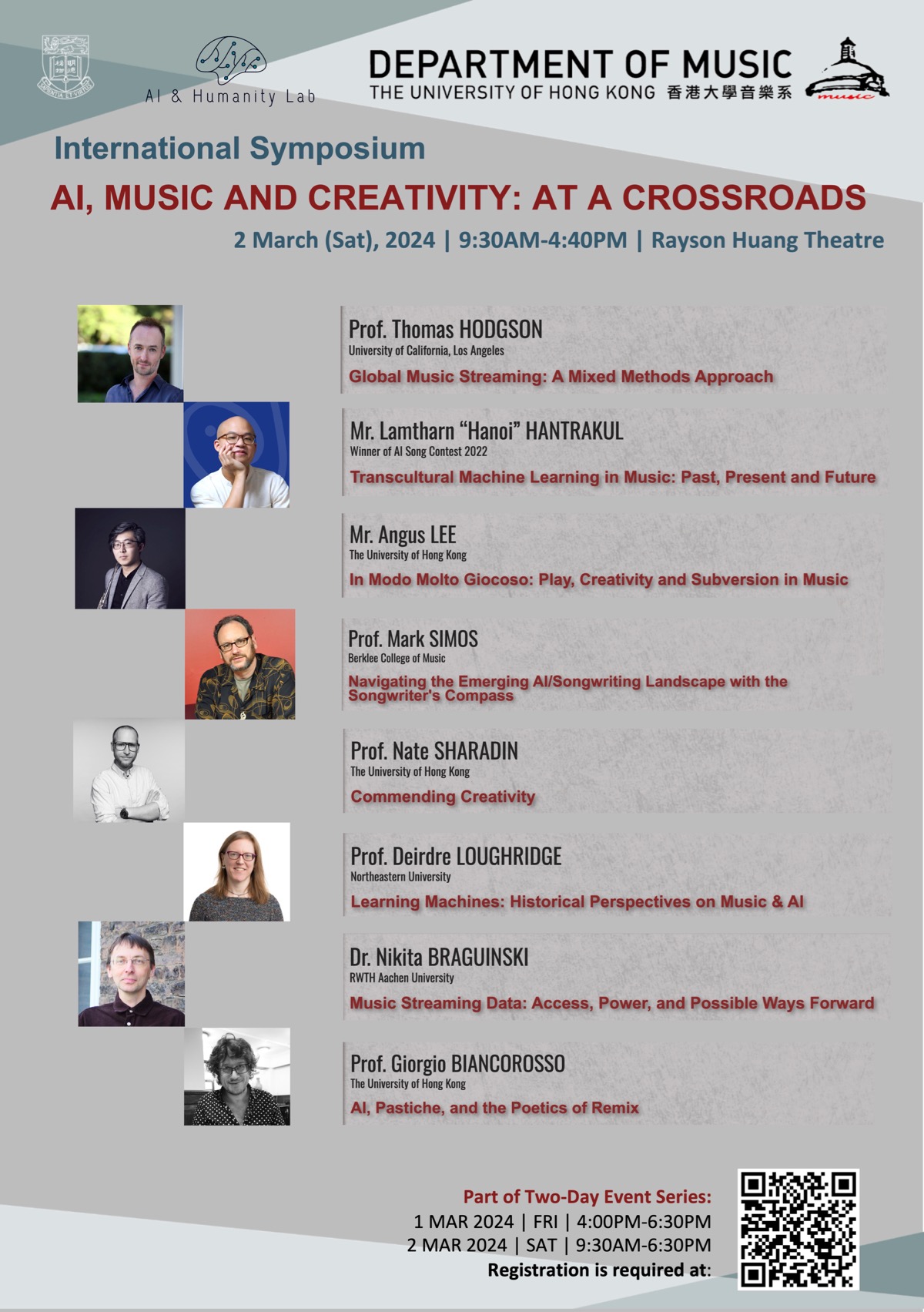

PANEL 1:

9:50AM-12:30PM

Music AI Beyond Borders:

The Inter-Nation, the Trans-Culture, and the Theory-Practice Dyad

Chair: Youn Kim (The University of Hong Kong)

Thomas Hodgson (University of California, Los Angeles)

Lamtharn "Hanoi" Hantrakul (Winner of AI Song Contest 2022)

Angus Lee (The University of Hong Kong)

Mark Simos (Berklee College of Music)

PANEL 2:

2:00PM-4:40PM

Critical Approaches to “Creative AI”:

Philosophical, Historical, and Musicological Interventions

Chair: Boris Babic (The University of Hong Kong)

Nate Sharadin (The University of Hong Kong)

Deirdre Loughridge (Northeastern University)

Nikita Braguinski (RWTH Aachen University)

Giorgio Biancorosso (The University of Hong Kong)

Google DeepMind; Mila - Quebec AI Institute; Massachusetts Institute of Technology

Advances in generative modelling have opened up exciting new possibilities for music making. How can we leverage these models to support human creative processes? First, I’ll illustrate how we can design generative models to better support music composition and performance synthesis. Coconet, the ML model behind the Bach Doodle, supports a nonlinear compositional process through an iterative, block-Gibbs-like generative procedure, while MIDI-DDSP supports intuitive user control in performance synthesis through hierarchical modelling. Second, I’ll propose a common framework, Expressive Communication, for evaluating how developments in generative models and steering interfaces are both important for empowering human-AI co-creation, where the goal is to create music that communicates imagery or a mood. Third, I’ll introduce the AI Song Contest and discuss some of the technical, creative, and sociocultural challenges musicians face when adapting ML-powered tools into their creative workflows. Looking ahead, I’m excited to co-design with musicians to discover new modes of human-AI collaboration. I’m interested in designing visualizations and interactions that can help musicians understand and steer system behaviour, and algorithms that can learn from their feedback in more organic ways. I aim to build systems that musicians can shape, negotiate, and jam with in their creative practice. Music probably also arose as soon as it could as something like the simple speech or song of the everyday communicative interactions of Homo sapiens: a form of "music" that creates and sustains our species' sociality by underpinning the sense of mutual affiliation that happens when we communicate with one another without seeking to dominate.

Anna Huang is a research lead on the Magenta project at Google DeepMind, spearheading efforts in Generative AI for Musical Creativity. She also holds a Canada CIFAR AI Chair at Mila, the Québec Artificial Intelligence Institute. Her research is at the intersection of machine learning and human-computer interaction, with the goal of supporting music making and, more generally, the human creative process. She is the creator of Coconet, the ML model that powered Google’s first AI Doodle, the Bach Doodle. In two days, Coconet harmonized 55 million melodies from users around the world. In 2018, she created Music Transformer, a breakthrough in generating music with long-term structure and the first successful adaptation of the Transformer architecture to music. Her ICLR paper is currently the most cited paper in music generation. She served the AI Song Contest for three years, first as a judge and then as an organizer. She was a guest editor for the special issue on AI and Musical Creativity of TISMIR, ISMIR’s flagship journal. This Fall, she will be joining Massachusetts Institute of Technology (MIT) as faculty, with a shared position between Electrical Engineering and Computer Science (EECS) and Music and Theater Arts (MTA).

KTH Royal Institute of Technology, Stockholm, Sweden

The world will soon be awash with music of a different kind: music generated by artificial intelligence (AI) that is impossible to distinguish by sound alone from music created by people. Algorithmic approaches to music composition and automated music performance have been topics of interest for centuries (e.g., see Arca Musarithmica (1650) and the "Book of Ingenious Devices" of Ahmad, Muhammad and Hasan ibn Musa ibn Shakir (850)). However, the contemporary confluence of digitized and accessible music data, along with computational resources and efficient machine learning algorithms, is making possible the wholesale creation of new music audio catalogues at a fraction of the cost of human labor and intellectual property rights. Dozens of AI-music generation services have arisen since 2015 to take advantage of this. As the CEO of one of these services remarked in 2022: "What does a hundred billion [AI-generated] songs per year look like, and how does that shift the market? Whoever is there first and whoever is doing that --- the spoils on the other side of that thing are just going to be ridiculous." My talk considers this future, asking whether it is bleak or not, and how we might prepare for it by establishing a new discipline: AI Music Studies.

Bob L.T. Sturm is Associate Professor of Computer Science in the Speech, Music and Hearing research division of the School of Electrical Engineering and Computer Science at the Royal Institute of Technology in Stockholm, Sweden. Sturm is originally from the USA and grew up in the hinterlands of Denver, Colorado. He received a PhD in Electrical Engineering from the University of California, Santa Barbara in 2009. He has degrees in physics (CU Boulder), computer music (CCRMA, Stanford), and multimedia engineering (Media Arts and Technology, UCSB). Sturm is the PI of the ERC-funded project MUSAiC: Music at the Frontiers of Artificial Creativity and Criticism (https://musaiclab.wordpress.com). The project, running 2020-2025, focuses on how artificial intelligence is disrupting and transforming our relationship to music, and pays particular attention to the frictions that arise when this technology meets traditional music practices. Sturm is the chair of the First International Conference on AI Music Studies, taking place in December in Stockholm, Sweden. Sturm also plays Irish traditional music on accordion.

University of California, Los Angeles

This paper explores the politics of socio-algorithmic “bordering” in global music streaming services, particularly as they map onto, across, and between national borders in South Asia and beyond. The talk employs a hybrid methodological approach: digital methods grounded with ethnographic study, paying close attention to the borders between the empirical and theoretical, quantitative and qualitative. The paper calls for continued interdisciplinarity and collaboration between ethnography and techniques of data science, particularly as streaming technologies surge online across the globe.

Thomas Hodgson is an ethnomusicologist who studies algorithms and AI in global contexts, with a particular focus on Pakistan and the Pakistani diaspora. He is currently finishing a book – Journeys of Love: Kashmiris, Music, and the Poetics of Migration – which explores questions of memory and exchange among musicians in Pakistan-controlled Kashmir. Outside academia, Thomas co-founded the music technology platform Tigmus (This is Good Music). The company, which came to represent over 900 venues and 4000 artists, used data from streaming and social media platforms such as Spotify, YouTube and Facebook to advise artists on the best places and times to perform. He is also a practicing musician and composer, playing the trumpet, keys and various other instruments in Stornoway, an indie folk band with three UK top-20 albums. Thomas recently collaborated with composer Edward Nesbit to produce an album in response to the Covid-19 pandemic. The album – Aenigmata – was shortlisted for the 2019 RMA Tippett Medal.

Winner of AI Song Contest 2022

In this talk, I’ll be bringing together machine learning, music and culture to discuss how we can develop AI systems which encompass a wide range of musical traditions. I’ll be drawing examples from my own technical research as an AI Research Scientist (e.g. DDSP) and my artistic work as a composer (AI Song Contest 2022 Winner), and weaving in perspectives based on modern approaches (e.g. LLMs) and historical-cultural turning points (e.g. the invention of the wax cylinder). I hope to inspire listeners to seek similar connections between machine learning and pan-Asian musical cultures, and to ask the right questions about the future of music AIGC in the Asia-Pacific.

Lamtharn Hanoi Hantrakul, a.k.a. “Yaboi Hanoi”, is an award-winning cultural technologist, composer and AI Research Scientist born and raised in Bangkok, Thailand. He is the winner of the 2022 International AI Song Contest, where his entry “อสุระเทวะชุมนุม - Enter Demons and Gods” reimagined melodies and tuning systems from Southeast Asia using the power of modern audio machine learning. Hanoi has co-invented breakthrough audio AI technologies including the open-source Differentiable Digital Signal Processing (DDSP) library at Google Brain, Tone Transfer and the realtime neural audio engine behind TikTok's MAWF plugin. Yaboi Hanoi innovates at the confluence of technical, artistic and cultural dimensions, striking a delicate balance between the modern and traditional, and respecting and challenging Southeast Asian culture through syntheses of music, dance, design and tech. @yaboihanoi on all socials.

The University of Hong Kong

What does the notion of 'play' entail in music? Departing from my experience both as an instrumentalist and a composer, I outline an analytic framework that posits an inextricable link between play and creativity in human-made music. In conceptualising subversion as an essential part of creativity, I also speculate on whether musical algorithms or artificial intelligence are capable of being truly 'creative.'

Angus Lee is one of the most versatile performer-composers of his generation, and is a graduate of the Hong Kong Academy for Performing Arts and the Royal Academy of Music. Lee has performed in solo and chamber recitals organised by the LCSD's "Our Music Talents" series (2018) and "City Hall Virtuosi" series (2023), CityU's Arts Festival (2021) and HKUST's Metropolis Festival (2021-22). He has performed as soloist at composer Pierre Boulez's 90th birthday celebration concert series at the Lucerne Festival. Lee's compositions have been performed by Ensemble Modern, Ensemble Multilaterale, Ensemble Intercontemporain, and Klangforum Wien, often with him as conductor; such was the case with his first opera, Chasing Waterfalls, commissioned for the season opening of Germany's Semperoper Dresden in 2022. The work also subsequently toured to Hong Kong's New Vision Arts Festival. Currently, he is working on an orchestral piece for the Hong Kong Philharmonic Composers' Scheme.

Berklee College of Music

When developers apply new technologies to creative work, they embed models and metaphors for that work in architectures and applications, which can in turn begin to re-shape, for better or worse, both the creative work and artists' and audiences' relationships to that work. Many models of songwriting process and practice reflected in AI/songwriting projects are technology-forward, i.e., structured with AI already in mind. This talk proposes adapting the Songwriter's Compass, a songwriting process model originally intended for songwriters and educators, as a more technology-agnostic, granular framework for documenting hybrid human/AI songwriting. The model does not prescribe a normative sequence of activities, but supports fine-grained descriptions of songwriters' diverse creative practices. Yet it can also suggest interventions in practice, such as unexpected strategies to advance songwriting skills, scope, and versatility. Extending the Compass model for "AI in the loop" projects could facilitate more consistent and comparable project descriptions, while suggesting new human/AI interactions and applications.

Mark Simos, acclaimed songwriter, composer, guitar accompanist and fiddler, is Professor of Songwriting at Berklee College of Music (Boston). His over 200 song covers include Grammy-winning tracks by Alison Krauss and Molly Tuttle, and span genres from bluegrass (Del McCoury, Ricky Skaggs) to rock (Australian icon Jimmy Barnes). At Berklee, Mark has created innovative teaching methods for songwriting, including the Songwriter's Compass model, and curriculum for listener-centered critique, guitar techniques for songwriters, and song/tune composition in roots styles. He is author of the Berklee Press books Songwriting Strategies: A 360° Approach and Songwriting in Practice. Mark also leads Berklee's annual Songs for Social Change Contest, and has brought that experience to his recent work as a judge for AI song contests. In prior work in software research, he pioneered domain modeling methods employing semantic-network techniques, work that informs his critical perspectives on current AI/music applications.

The University of Hong Kong

Artificial systems can create wondrous aesthetic objects, including musical compositions, visual art, and written work. But are these systems and their products creative? That depends on the correct view of aesthetic creativity; philosophers predictably disagree over what, exactly, creativity comprises. Here, I explain how metanormative pragmatists like myself think about this issue and the accompanying disagreement over whether artificial systems can be creative. In general, rather than attempting to provide necessary and sufficient conditions for a normative concept, metanormative pragmatists offer an etiology for the concept and an explanation for its continued use in terms of the functional role it serves. On this view, questions of whether artificial systems are (or can be) creative amount to questions of whether, and how, to commend these systems, and their products, to our use.

Nate Sharadin is an Assistant Professor of Philosophy at the University of Hong Kong. His research is on normative issues in ethics and epistemology. He's currently writing a book on the nature and importance of artificial achievements.

Northeastern University

“Robots Can Make Music, but Can They Sing?” read the 2021 New York Times headline about the second international AI Song Contest. The headline reiterates a habit dating back to the 1750s, of drawing a boundary between human and machine and (re)defining the former by what the latter cannot do. In the mid twentieth century, the concept of creativity crystallized as existing on the human side of that boundary, and AI as machinery destined to take on the remaining bastions of the uniquely human. In my book Sounding Human, I examine eighteenth-century and more recent musical engagements with “human” and “machine” that provide alternatives to these prevailing concepts of AI and creativity, including musicians’ engagements with machine learning around 2020. Drawing on those examples, this talk reflects on the “crossroads” represented by our current moment, particularly with respect to how we understand what AI is and can do in music-making.

Deirdre Loughridge is a musicologist and Associate Professor in the Department of Music at Northeastern University. Her research broadly concerns the history of music, science, and technology, and has been published in journals and edited volumes including Eighteenth-Century Music, Journal of the Royal Musical Association, Resonance: The Journal of Sound and Culture and The Oxford Handbook of Timbre. With Elizabeth Margulis and Psyche Loui, she is co-editor of the interdisciplinary book The Science-Music Borderlands: Reckoning with the Past and Imagining the Future (MIT Press, 2023). With Thomas Patteson, she is co-curator of the Museum of Imaginary Musical Instruments. Her newest book, Sounding Human: Music and Machines 1740/2020, was published by University of Chicago Press in December 2023, and explores how musical artifacts have been, or can be, used to help explain and contest what it is to be human historically and today.

RWTH Aachen University

Data collected by online music streaming companies is a unique new source for music-related research. It constitutes a novel kind of measurement of listening behavior which might, through its scope and granularity, trigger a paradigm shift in the study of music-related topics by enabling the discussion of previously unanswerable questions. However, the power imbalance between the data-owning company and the researcher asking for access might hinder productive and open scholarly work. In this presentation, I will discuss possible ways forward for the interaction between academia and the music streaming industry, including collaboration (via an intermediary body of institutions) and donation-based practices.

Nikita Braguinski is a musicologist and media theorist. His current book “Mathematical Music. From Antiquity to Music AI” (Routledge, 2022) was recently translated into Korean, receiving the Sejong book prize in 2023. He was a Fellow at Harvard University, a Visiting Scholar at the University of Cambridge, and a Researcher at Humboldt University of Berlin with funding from the Volkswagen Foundation. In 2023, he co-convened, together with Eamonn Bell and Miriam Akkermann, the ZiF Bielefeld Visiting Research Group “The Future of Musical Knowledge in the Age of Machine Learning”. Nikita Braguinski is currently a Fellow at the KHK Cultures of Research at the RWTH Aachen University.

The University of Hong Kong

The use of playback tools as means for creating new music has opened the world of composition to countless individuals who do not excel in the traditional skills of reading notation, mastering an instrument, arranging or writing counterpoint. AI music generation would seem to provide a fertile terrain for the continuation of this trend. Yet the cloud is inundated with music that is new only in the restricted sense of not being liable for plagiarism. One is reminded of the silent cinema accompanist who, prompted by a scene (“the input”), draws on a stock of formulas (“the database”) to craft what is essentially pastiche. Take, by contrast, the work of DJs and compilers working with samples. To be accessible at all, samples must have been recorded and indexed. Like the tool set of the bricoleur, they constitute a closed system. But that is not a hindrance to the creative impulse; quite the contrary. Remixes and mash-ups transform already-existing music by drastically altering its conditions of reception via the manipulation, miniaturization and reconfiguration of both signaled and unsignaled samples. So do compilation soundtracks by splicing music to moving images and novel dramatic situations. “We have no more beginnings,” the opening line of George Steiner’s Grammars of Creation, itself a heady remix of much of the world’s literature, need not sound like the lament it was meant to be.

Giorgio Biancorosso is Professor of Music at The University of Hong Kong. His work investigates the boundaries of music and sound in the theater, cinema and digital media. He is the author of Situated Listening: The Sound of Absorption in Classical Cinema (Oxford University Press, 2016) and Remixing Wong Kar Wai: Music, Bricolage, and the Aesthetics of Oblivion (Duke University Press, forthcoming). Biancorosso is the co-founder and editor of the journal SSS (Sound-Stage-Screen) and the co-editor, with Roberto Calabretto, of Scoring Italian Cinema: Patterns of Collaboration (Routledge, forthcoming). Biancorosso is also active as a programmer, dramaturg, and stage director.